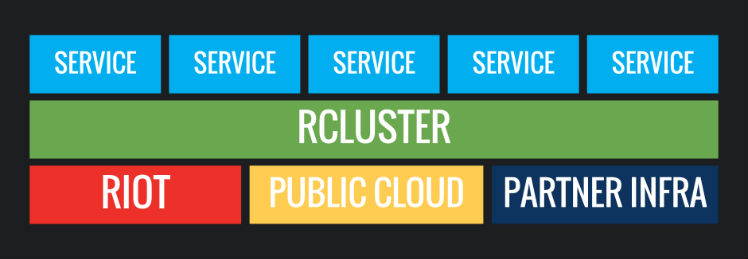

Leveling Up Networking for A Multi-Game Future

Heyo! We’re Cody Haas and Ivan Vidal, and we’re engineers on the Riot Direct team. It’s been a while since you’ve heard from our team, so in this article we’re going to tell you a bit about what we’ve done to keep connections consistent and stable, reduce latency, and improve the overall player experience across our entire multi-game portfolio.