Engineering Esports: The Tech That Powers Worlds

Lights, camera, action... and cables?

Very few people understand the infrastructure that forms the backbone of major esports events, and even fewer talk about it.

We’re the Esports Technology Group, and we’re responsible for the tech behind Riot’s biggest esports events, from reliable network connectivity to global broadcast capabilities to specialty tournament servers to the custom PC fleet used by pros. Part of our role at Riot is to approach typical broadcast and live production challenges with scalable and technology-driven solutions.

In this article, we’ll give you a peek behind the curtain at some of the specialized tech we’ve built over the years to put on our biggest event of the year. Our nomination for the IBC 2019 Innovation Award prompted us to share more about the complex technical backbone of the League of Legends World Championship.

League of Legends World Championship Finals 2017, Bird’s Nest, Beijing - photo: Lolesports Flickr

The biggest technical challenges we face for esports events fall into three main categories: networking, hardware, and broadcasting. Each gets more complex once you consider both its remote and local implementations. This article describes each of these problem spaces and walks you through our solutions.

Global Infrastructure and Remote Networking

Live shows that operate at a scale as large as Worlds require significantly more network capacity than typical events. Furthermore, hosting an event based on an online video game adds an extra layer of complexity compared to a live concert or a traditional sporting competition due to the inherent needs of the game itself. Having a reliable network connection is key for a competitive multiplayer game such as League of Legends, and it can be a challenge sourcing that capacity. By doing intense research into providers, securing quotes, installing circuits, setting up high-speed point-to-point connections with the help of Riot Direct (Riot’s ISP), and working with local networking teams, we’re able to construct a dependable networking framework that meets our highly specific needs.

League of Legends World Championship Finals 2016, Staples Center, Los Angeles - photo: Lolesports Flickr

Finding The Right Networking Providers

To support a stable connection that won’t drop out right before a sweet Baron steal, our teams conduct fiber surveys to discover nearby fiber optic backhauls a full year before we even set foot at a venue. Our shows have very specific network bandwidth needs that only select providers can satisfy. We generally look for at least two high-bandwidth circuits per venue with diverse paths to ensure redundancy. Because our shows happen all over the world, we work with local partners to find the providers that meet our requirements. And with some shows spread across 4 or more stages, we regularly find ourselves coordinating 8 or more circuits with several providers.

Providers don’t always share their available fiber counts and locations, so it's a bit like finding a needle in a haystack. The research process generally starts either at the venue we’ve chosen for the event or at a local data center.

League of Legends World Championship Finals 2018, Munhak Stadium, Incheon - photo: Lolesports Flickr

Here’s the problem - most traditional events that use these venues, like regular sport matches, concerts, and conventions, don’t require high-bandwidth point-to-point connections to particular data centers. This means accurate data is hard to come by and even harder to come by quickly. Starting a year ahead of Worlds gives providers the chance to sort through their systems and evaluate what they can actually offer.

This is where it gets even more complex - we’re not just looking for internet circuits. We’re looking for very specific, high capacity, reliable point-to-point circuits that can get us onto Riot’s private internet backbone: Riot Direct.

Riot Direct

At Riot, we operate our own global internet service provider, Riot Direct. With multiple terabits of edge capacity, it’s a resource we trust and enthusiastically leverage for the most reliable network possible. We use the list of Riot Direct PoPs (points of presence) to find the most strategic location to connect our venue to.

At first glance, it would seem logical that the nearest PoP is the best option, but this isn’t always the case due to variables like specific capacity and geographic limitations. At our recent 2019 Mid-Season Invitational in Hanoi, we used a connection to Singapore instead of a PoP in Vietnam due to capacity constraints and limited capabilities of Vietnamese infrastructure.

In order to connect to Riot Direct, we carefully select diverse routes for our traffic to traverse, avoiding any shared PoPs or long-haul fiber routes. For example, at MSI 2018 in Paris, both of our routes connected to Riot Direct in Amsterdam, a major data center for League of Legends, but they did so through wildly divergent paths. One routed through downtown Paris, while the other took a longer route through Frankfurt, Germany, and finally on to Amsterdam. Our ultimate goal is always to survive a fiber cut anywhere along either path (this has happened!) by dynamically routing traffic over the best path and failing over all our traffic in a few tenths of a second.
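The route-diversity rule above can be expressed as a simple check. The sketch below is a hypothetical model, not Riot's tooling: each circuit is an ordered list of sites (the names are invented, loosely following the Paris example), two routes count as diverse when they share no intermediate site and no site-to-site segment, and the active path is the lowest-latency route still marked healthy. Sub-second failover in a real network would be handled by the routing layer, not by application code like this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    """One circuit from the venue to a Riot Direct PoP (hypothetical model)."""
    name: str
    hops: tuple[str, ...]  # first = venue, last = destination PoP (invented site names)
    healthy: bool = True   # assumed to come from link monitoring
    rtt_ms: float = 0.0    # assumed measured round-trip time


def _links(route: Route) -> set[frozenset[str]]:
    # Treat each hop-to-hop span as an undirected fiber segment.
    return {frozenset(pair) for pair in zip(route.hops, route.hops[1:])}


def is_diverse(a: Route, b: Route) -> bool:
    """True if the routes share no intermediate site and no segment.

    Endpoints may be shared: in the Paris example both routes
    landed on Riot Direct in Amsterdam.
    """
    shared_sites = set(a.hops[1:-1]) & set(b.hops[1:-1])
    shared_links = _links(a) & _links(b)
    return not shared_sites and not shared_links


def pick_path(routes: list[Route]) -> Route:
    """Choose the lowest-latency healthy route, falling back when one fails."""
    candidates = [r for r in routes if r.healthy]
    if not candidates:
        raise RuntimeError("no healthy path to Riot Direct")
    return min(candidates, key=lambda r: r.rtt_ms)
```

For example, a route through central Paris and a route through Frankfurt that both end in Amsterdam pass `is_diverse`, while two routes that both traverse the same intermediate city do not.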

Once we establish our connection to Riot Direct, our communication and transmission equipment connects back to Los Angeles. We rely on hundreds of applications to produce any event, and one of the most important is our intercom and communications system. Each team has an intercom panel or VoIP device so they can communicate locally and with our production staff back home in Los Angeles, which is why intercom is one of the very first applications we bring online. Video encoders, decoders, and other signal transmission devices and applications follow quickly. Ultimately, our goal for remote production is to link our production facilities seamlessly with our show, whether it’s in the same building or 6,000 miles away.
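The bring-up priority described above (connectivity, then intercom, then encoders, decoders, and other transmission gear) is essentially a dependency ordering. As a hypothetical sketch, not Riot's tooling, it can be expressed with Python's standard-library `graphlib`; the service names and "start after" edges are assumptions chosen to mirror the paragraph.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical bring-up plan: each service maps to the services that must be
# online before it. Names and edges are illustrative, not Riot's inventory.
BRING_UP_AFTER = {
    "riot_direct_uplink": set(),
    "intercom": {"riot_direct_uplink"},
    "video_encoders": {"intercom"},
    "video_decoders": {"intercom"},
    "signal_transmission": {"video_encoders", "video_decoders"},
}


def bring_up_order(plan: dict[str, set[str]]) -> list[str]:
    """Return one valid start-up order; raises CycleError on a circular plan."""
    return list(TopologicalSorter(plan).static_order())
```

Any order this returns starts the uplink first and intercom second, while encoders and decoders, which do not depend on each other, may come up in either order.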

With connectivity squared at the venue and our path back to LA sorted out, we set about getting our local teams online and running at the venue.

Local Networking

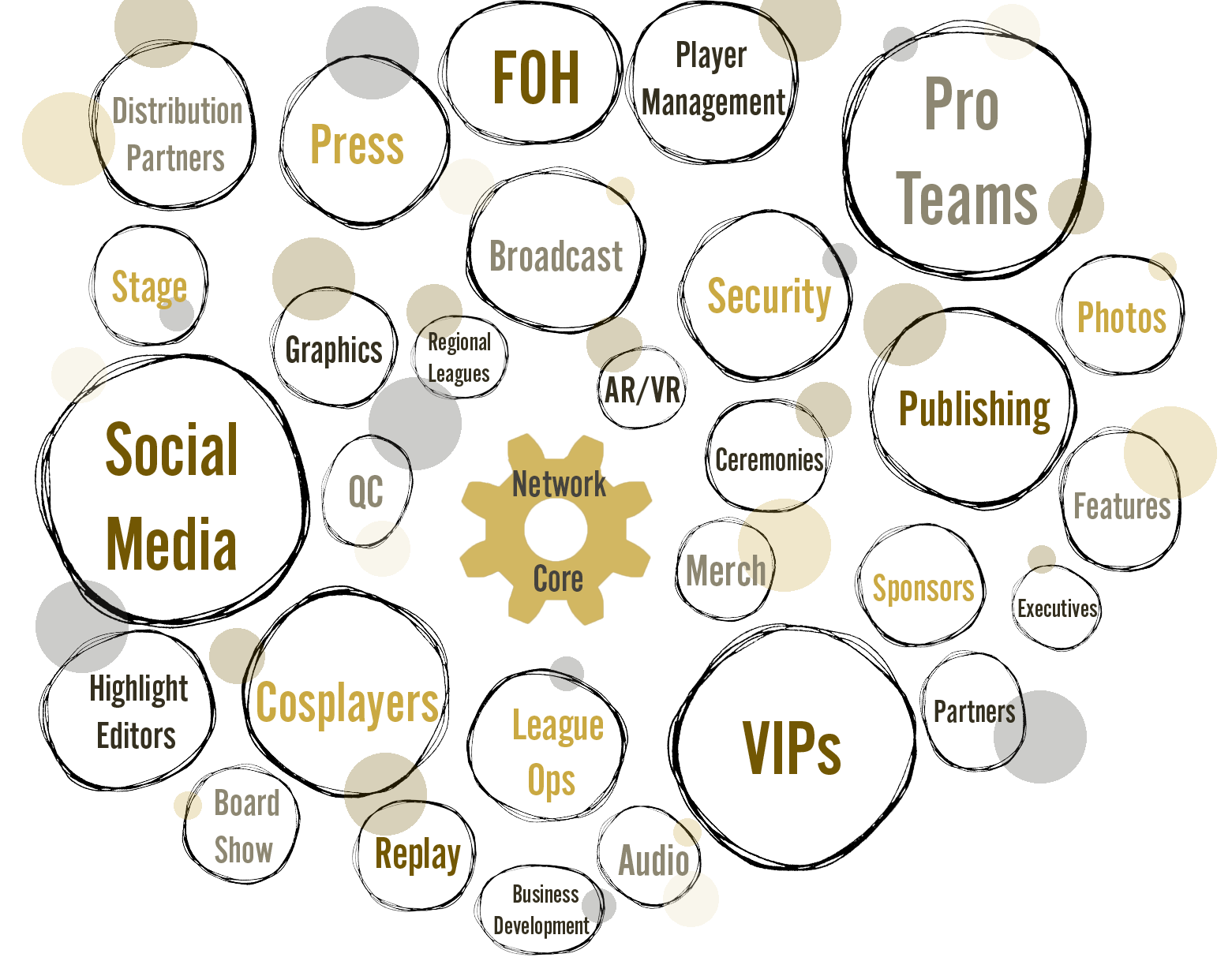

Imagine a small city with hundreds of tenants all needing access to specific resources in order to live their day-to-day lives. That’s a great analogy for a major esports event put on by Riot - various teams, from merchandise to broadcast to the teams that design our intricate opening ceremonies, work together to create our massive live events, and all of them require certain technical tools as well as reliable networking to do their jobs properly. It’s our responsibility as the Esports Technology Group to provide that ubiquitous access.

Essentially, we have to stand up a network capable of supporting a medium-sized business a few days before the show starts, and then tear it down efficiently in just a few hours after the closing ceremonies.

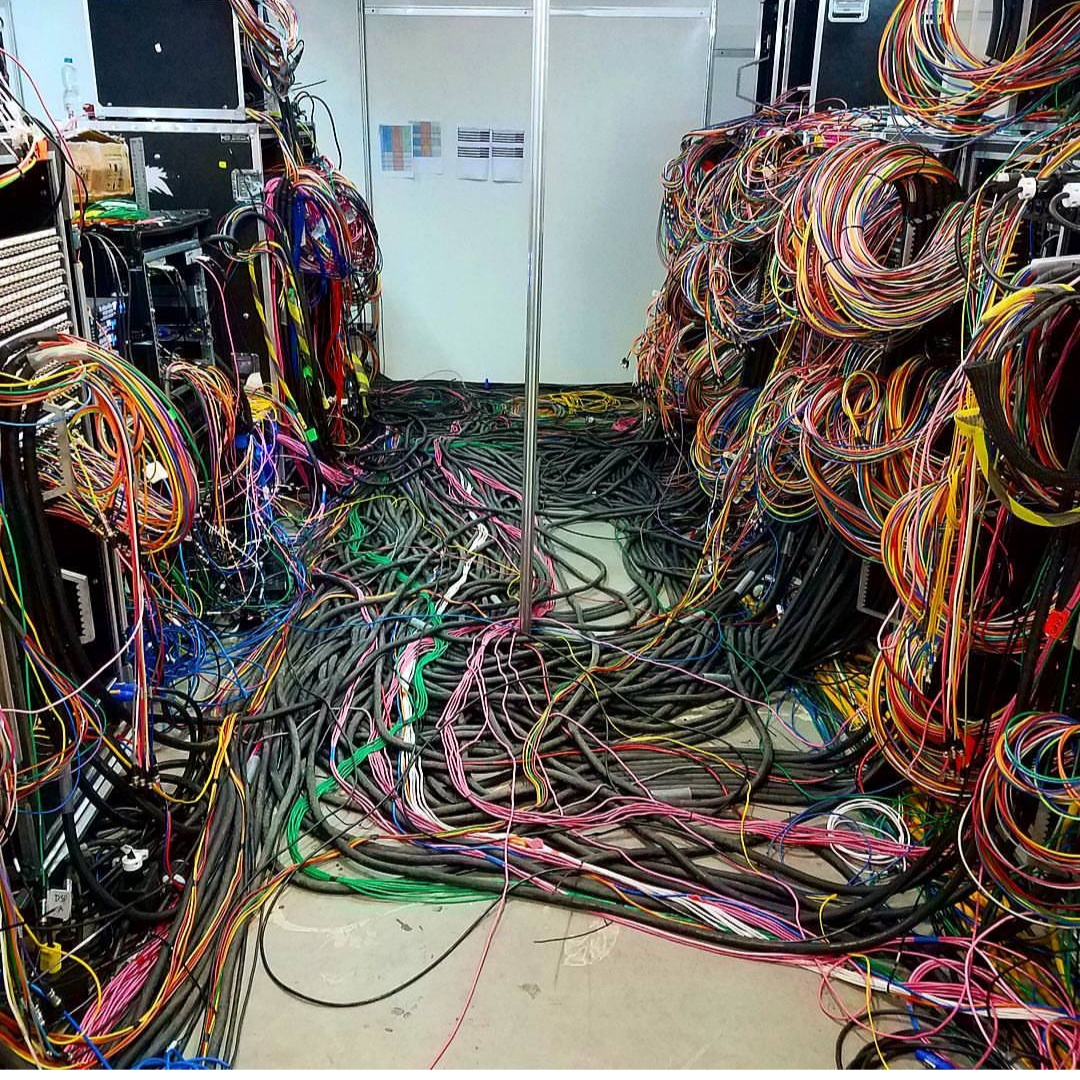

Fiber Optics

It all starts with fiber optic cables running throughout the venue to every conceivable access location. These runs originate at our central network hub, buried deep within the venue, from which we deploy dozens of fiber optic and copper cables. This allows us to build a fully functioning, specialized, high-capacity infrastructure at any venue in the world. Depending on the age and existing infrastructure of the venue, setting this up can be simple (patching within the venue’s intermediate distribution frames, or IDFs, and running fiber from its main distribution frames, or MDFs) or a completely manual and bespoke process (imagine hundreds of copper and fiber runs through the entire venue). At most shows we deploy miles of cable between our various teams.

One of the most difficult and interesting fiber runs we’ve attempted was during the 2014 World Finals in Seoul, South Korea. Network connectivity was required outside the stadium to our partner activation area and merchandise store, as well as to a set of ticket booths even further away. There was no existing infrastructure we could leverage, so we had to run our own. The area was enormous and close to the main entrance, so we had to keep the fiber safely out of the way to avoid tripping up fans. The long distance prompted a lot of head scratching, pointing, and creative ideas. Ultimately, the route took the cable high above the crowd, in and out of drain pipes and gutters several times, and even down a staircase. The total fiber run for that one area was nearly 3,000 feet.

Of course, not all of our onsite tenants require the same level of access or priority. While some teams may just need internet access to check and answer email and Slack, other teams have much more demanding needs. For example, our Features team, who are responsible for the teaser videos you see on the broadcast, have to turn around their videos between show days, which means they need extra bandwidth to upload hundreds of gigabytes of high quality video content overnight.

To accomplish this, we set up several specialized virtual LANs (VLANs) and enforce a different tier of service for each of our onsite teams, with its own rules, from broadcast engineering to press. At our finals in Korea last year, we supported over 1,200 devices for everyone who worked at the show.
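Per-team tiers like these are often kept as a small lookup table. The sketch below is purely illustrative: the VLAN IDs, priority numbers, and upload caps are invented, and only the ordering (pro players first, a generous uplink for the Features team, lighter access for press) follows the text. Unknown teams fall back to a restricted guest tier.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ServiceTier:
    vlan_id: int                    # hypothetical VLAN tag
    priority: int                   # 0 = served first (assumed convention)
    uplink_cap_mbps: Optional[int]  # None = no per-team cap


# Illustrative tiers only; the article does not publish Riot's actual rules.
TIERS = {
    "pro_players": ServiceTier(vlan_id=10, priority=0, uplink_cap_mbps=None),
    "broadcast_engineering": ServiceTier(vlan_id=20, priority=1, uplink_cap_mbps=None),
    "features": ServiceTier(vlan_id=30, priority=2, uplink_cap_mbps=1000),  # overnight uploads
    "press": ServiceTier(vlan_id=40, priority=3, uplink_cap_mbps=50),       # email and Slack
}
GUEST = ServiceTier(vlan_id=99, priority=9, uplink_cap_mbps=10)


def tier_for(team: str) -> ServiceTier:
    """Look up a team's tier, falling back to a restricted guest tier."""
    return TIERS.get(team, GUEST)


def service_order(teams: list[str]) -> list[str]:
    """Sort teams so that higher-priority tiers are served first."""
    return sorted(teams, key=lambda t: tier_for(t).priority)
```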

However, when it comes to access to our network infrastructure, the group with the highest priority is our professional players. When they go on stage, they play on a customized ecosystem carefully designed for professional play and optimized for latency and performance.

All of the network infrastructure we’ve described so far sets the foundation for our broadcast and enables our players to compete at the highest possible level - with the most reliable connectivity that we can guarantee. But no League of Legends tournament would be possible without the game itself.

A typical League of Legends shard - the ones that you connect to when you fire up a game of League at home - contains hundreds of subsystems, microservices, and features designed to cope with the massive scale of our global player base. When a player logs in, most of these subsystems and microservices exist within the “platform” portion of our game (where you authenticate, enter the landing hub of the client, choose your champion and skin, shop, and manage your account). However, once a player starts a game and completes champion select, they connect to a game server. Game servers are located all across the world in data centers. At last count there were more than 20,000 game servers globally.

The shards we use for competitive events are very similar to the shards used by the rest of our (non-professional) players, but there are a few key differences. Imagine fans in an arena watching the last game of a full five-game series, and the pro players suddenly jump up to celebrate while the audience is still watching an inhibitor push. This is a subpar experience: we want fans to experience the game with our pros in real time. Our spectator system connects directly to the game server rather than going through the grid-based system set up for online League of Legends shards, which allows near-zero delay when spectating the game.

Our systems require a global network

However, connectivity over the internet still introduces risks we can’t control, and League of Legends relies heavily on low latency and a flawless connection for a smooth playing experience. Because League sends game data over UDP, which has no built-in delivery guarantee, packets could still be dropped if we used a traditional internet connection for the game process.

This is why our specialized ecosystem is vital for ensuring high confidence in the integrity of the competitive nature of League. Professional players at our major events play on a hybrid type of shard connected to our offline game servers. This ensures no risk of network lag or packet loss during competitive play.

Offline Game Servers

Latency and packet loss are the biggest concerns we (and the pros) have when it comes to ensuring a fair competition. Since our events can be held anywhere, we must be able to provide a stable, low-latency environment at every major competitive event, regardless of location. Our offline game servers are portable and function completely independently of the internet, so they deliver near-zero latency at all times.

Here’s how our offline game server system works. A few members of the Esports Technology Group are onsite at each major tournament. During the first few days of the load-in phase, when we prepare the venue, build the stage, and customize the space for our event, engineers from our systems, network, and security teams stand up a local area network. This network consists of VLANs built for the different hardware, services, and applications needed to run the show. Game servers, for example, are highly protected and live in a guarded tier that allows as little inbound access as possible: only a small, select group of users can reach them.
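A guarded tier with as little inbound access as possible usually means a default-deny policy: nothing gets in unless a rule explicitly allows it. The sketch below models that idea with Python's standard `ipaddress` module. The networks and ports are hypothetical, and in production such rules would live in firewall or switch ACLs rather than in application code.

```python
import ipaddress

# Hypothetical inbound rules for the guarded game-server tier: everything is
# denied unless a (source network, destination port) pair is listed here.
INBOUND_ALLOW = [
    (ipaddress.ip_network("10.50.0.0/29"), 22),    # assumed admin jump hosts, SSH
    (ipaddress.ip_network("10.60.0.0/24"), 5000),  # assumed player VLAN, hypothetical game port
]


def inbound_allowed(src_ip: str, dst_port: int) -> bool:
    """Default-deny check: allow only explicitly listed source/port pairs."""
    src = ipaddress.ip_address(src_ip)
    return any(src in net and dst_port == port for net, port in INBOUND_ALLOW)
```

Keeping the allow list short and specific, rather than listing what to block, is what makes the tier "guarded": a new device or service gets no access until someone deliberately adds a rule.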

Our offline game servers run a heavily modified operating system. Because of the nature of our game, deterministic hardware performance is vital. To this end, we disable any CPU clock boosting, since it can lead to non-deterministic performance as the processor moves between throttling states. We also disable chip-level power-saving features and hyper-threading. Until 2018, we ran these offline game servers as virtual machines, but a recent change in League’s backend protocols led us to move our portable game servers to bare metal.
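The article does not say which operating system the game servers run. Assuming a Linux host, the boost and hyper-threading settings above can be audited through standard sysfs files: `/sys/devices/system/cpu/smt/control` for SMT (hyper-threading), `/sys/devices/system/cpu/intel_pstate/no_turbo` for Intel Turbo Boost under the `intel_pstate` driver, and `/sys/devices/system/cpu/cpufreq/boost` for boost under drivers such as `acpi-cpufreq`. This hypothetical check only reports settings. It does not cover C-states or other power-saving controls, and any file that is missing on a given kernel or driver is skipped.

```python
from pathlib import Path
from typing import Callable, Optional

# Standard Linux sysfs controls (each exists only with the matching kernel/driver).
SMT_CONTROL = "/sys/devices/system/cpu/smt/control"              # "on", "off", "forceoff", ...
INTEL_NO_TURBO = "/sys/devices/system/cpu/intel_pstate/no_turbo"  # "1" = turbo disabled
CPUFREQ_BOOST = "/sys/devices/system/cpu/cpufreq/boost"          # "1" = boost enabled


def read_sysfs(path: str) -> Optional[str]:
    """Return the stripped contents of a sysfs file, or None if unavailable."""
    try:
        return Path(path).read_text().strip()
    except OSError:
        return None


def determinism_problems(read: Callable[[str], Optional[str]] = read_sysfs) -> list[str]:
    """List settings that would allow clock boosting or hyper-threading."""
    problems = []
    if read(SMT_CONTROL) == "on":
        problems.append("hyper-threading (SMT) is enabled")
    if read(INTEL_NO_TURBO) == "0":
        problems.append("Intel Turbo Boost is enabled")
    if read(CPUFREQ_BOOST) == "1":
        problems.append("cpufreq boost is enabled")
    return problems
```

On a host, `determinism_problems()` reads the real files. The `read` parameter lets the same logic run against a plain dictionary, for example in a pre-show checklist or a test.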

Being able to connect to the game is not enough, though. Obviously we need more than just game servers to make a tournament happen. We also need the PC hardware to actually play the game.

Local Tech and Hardware

Consider the sheer scale of hardware required to put on a show like Worlds. The venues we use are usually empty before we arrive. Let's take the buildout of a typical practice room (one of the rooms we set up for pro teams to scrim in) and examine each piece that goes into it.

Practice room at LCS HQ in Los Angeles - photo: Greg Crannell

A typical practice room at Worlds contains seven player stations, each consisting of an Alienware Aurora R8, a 240Hz gaming monitor, and a Secretlab gaming chair. A shared network switch connects all the machines, so teams can scrim either on the offline game servers or online on a regional shard.

Did you know?

Each practice room also has a special TV with internal, private program feeds capable of being changed to reflect a team's needs (custom video feed, spoken language, team audio during games, etc.).

PC Fleet

We set up a private practice room for each of the 24 teams who participate in Worlds.

Let’s do some simple math. Seven PCs per practice room, over 16 concurrent team practice rooms, 10 stage PCs, dozens of spares (in case any of our PCs malfunction), observer PCs... and the list goes on. All in all, we have over 140 sets of PCs and monitors. We have to image every PC in this fleet before each major show to account for Windows and security updates, and we have to update custom drivers for each of the professional players in the tournament - and the players change per tournament. Managing our PC fleet is a monumental task, especially considering the scale we’re operating at.
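Writing out the figures given above makes the total concrete. Only the practice-room and stage counts are stated exactly, so the spare and observer counts are left as unknowns:

```latex
N_{\text{PCs}} \ge \underbrace{7 \times 16}_{\text{practice rooms}} + \underbrace{10}_{\text{stage}} + N_{\text{spare}} + N_{\text{observer}} = 122 + N_{\text{spare}} + N_{\text{observer}}
```

Even with the minimum of 16 practice rooms, practice and stage machines alone account for 122 PCs. The spares, observer PCs, and other machines make up the rest of the 140+ total.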

Shipping cases being loaded for Worlds - photos: David Chan, Chris Ward

Competitive Stage Setup Specs

Alienware Aurora PC

9th Gen Intel® Core™ i7-9700K

16GB Dual Channel DDR4 XMP 3200MHz

NVIDIA® GeForce® RTX 2070 OC with 8GB GDDR6

512GB M.2 PCIe NVMe SSD

Alienware 850 Watt PSU with High Perf Liquid Cooling

Alienware 25 Gaming Monitor

240Hz native refresh rate

Full HD (1080p) 1920x1080

1ms response time

400 cd/m² brightness

Onsite Streaming

Now let’s take a look at the in-house TV feeds. The traditional approach to this problem in broadcast circles is to run coax cables to every room requiring a feed. This is expensive and hard to scale, so one of our engineers tackled this problem by using a customized IPTV system.

In each venue room that needs a feed displayed on-screen, we deploy a Raspberry Pi running a custom build of Linux. A custom-built management server controls the Pis and dictates which stream each one displays, since we typically produce over 20 full production streams concurrently. Being able to select a stream per room is essential for running a smooth show, in part because we’re actually producing dozens of localized broadcasts for our international audience. One room could be used by Chinese casters who need to watch the Chinese stream, while the room next door could host the NA Features team, who listen to the show in English. Fun fact: we even 3D print our own custom set-top box cases!
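The management server's core job, remembering which stream each room's Pi should display, can be sketched as a small lookup table. This is a hypothetical model, not Riot's implementation. The room names, stream IDs, and multicast URLs are invented, and the article does not describe how a Pi actually receives its assignment.

```python
from dataclasses import dataclass, field


@dataclass
class StreamController:
    """Hypothetical room -> stream table kept by an IPTV management server."""
    streams: dict[str, str]  # stream id -> source URL (invented values)
    assignments: dict[str, str] = field(default_factory=dict)  # room -> stream id

    def assign(self, room: str, stream_id: str) -> None:
        """Point one room's set-top box at a production stream."""
        if stream_id not in self.streams:
            raise KeyError(f"unknown stream {stream_id!r}")
        self.assignments[room] = stream_id

    def source_for(self, room: str) -> str:
        """What the Pi in `room` should currently play."""
        return self.streams[self.assignments[room]]


controller = StreamController(streams={
    "zh": "udp://239.0.10.1:5004",  # Chinese broadcast (hypothetical)
    "en": "udp://239.0.10.2:5004",  # English broadcast (hypothetical)
})
controller.assign("cn-caster-booth", "zh")
controller.assign("na-features-room", "en")
```

Moving a room to a different localized broadcast is then a single `assign` call. That per-room flexibility is what replaces re-patching coax.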

Remote Broadcast

All the hard work and complex technical solutions we’ve discussed so far still wouldn’t make for much of a show without a way to broadcast it around the world. Over the years, the number of people required onsite to properly produce a major League of Legends event kept growing, until a year ago, when we deployed the second generation of our remote production transmission kit. While we’ve used at-home broadcasting methods since 2014, 2018 marked a significant shift in our capabilities: the new kit uses a tenth of the bandwidth and allows for a much smaller onsite footprint. This enabled the majority of our crew to stay home while producing the show with no loss of quality.

Onsite production footprint - photo: Michael Caal

Engineering monitoring station for encoded feeds - photo: Michael Caal

Until a few years ago, an event as large as the League of Legends World Championship would have been produced using typical broadcast production trucks: think semi-trucks with pop-out bays equipped with video equipment, servers, and audio booths. These were designed to create a broadcast from multiple sources such as cameras, microphones, and graphics engines, composited together into a video feed sent around the world.

Riot’s team of broadcast engineers spent several years designing and refining the methods by which we create our Worlds feed, the cleaned-up feed we send to dozens of countries across the world for them to customize for their local audiences. Beyond producing the feed, we manage hundreds of video and audio channels that need to be routed correctly to their destinations. Having so many custom channels lets more regions produce the show remotely, using cameras, audio, and game feeds from around the world, without the added cost and logistics of flying regional staff and partners onsite. We collaborate with many international broadcast production partners and over 40 distribution platforms.

Maxwell Trauss, Technical Producer with Korea Broadcast Team - photo: Michael Caal

Distribution partners for Worlds 2018

This has opened up completely new opportunities for smaller regions to create content tailored to their shows. With detailed 360-degree venue maps, created months in advance, teams can understand the available camera shots and angles and then simply request any number of robotic cameras around the venue. The broadcast team can then design and execute remote transmission, operation, and painting (color shading) of the robotic camera feeds to, say, a studio in Brazil at the same time as a studio in China.

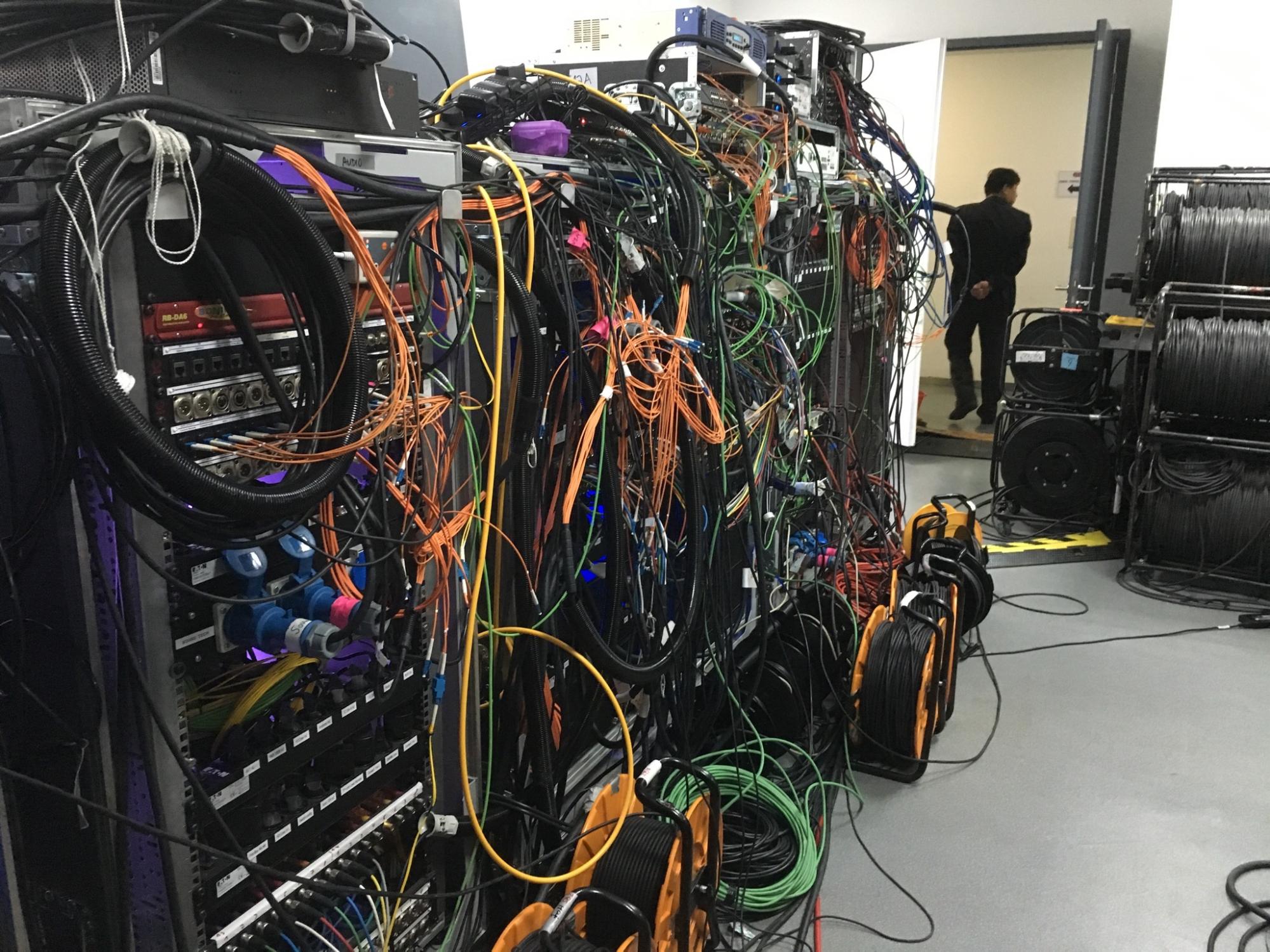

50+ cameras, hundreds of audio sources, miles of fiber optic cabling, and not a truck in sight. The footprint has decreased from having several production trucks onsite with all the operators and creative personnel, alongside several satellite trucks, to today’s iteration consisting of about 10 high density video, audio, encoding, and network racks in one onsite engineering room. This is all coordinated via our real-time, global communications infrastructure, spanning several continents and time zones, including the flagship studio in Los Angeles and partner facilities in places like Brazil, China, Korea, and Germany.

Broadcast engineering racks at Worlds - photo: Michael Caal

Everything from cameras and in-game observer feeds to caster audio and crowd microphones is encoded into video and audio streams and transmitted back to our primary remote production center in Southern California. Our broadcast engineers use a few different codecs to transport the various video feeds, chosen based on round-trip time (to minimize latency and deliver the signals as fast as possible end-to-end), the importance of each signal (primary feeds have built-in forward error correction, or FEC), and quality considerations. The codecs are a mix of H.264 and JPEG2000 streams.
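The article does not specify which FEC scheme the primary feeds use. As a generic illustration of the idea, the sketch below implements the simplest packet-level FEC: one XOR parity packet per group of equal-length packets. This lets the receiver rebuild any single lost packet in that group without waiting for a retransmission. Broadcast FEC schemes use more elaborate arrangements (for example, parity across both rows and columns of a packet matrix), so treat this only as a sketch of the principle.

```python
from functools import reduce
from typing import Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length packets."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(group: list[bytes]) -> bytes:
    """Build one parity packet protecting a group of equal-length packets."""
    if len({len(p) for p in group}) != 1:
        raise ValueError("packets in a group must be non-empty and equal length")
    return reduce(xor_bytes, group)


def recover(received: list[Optional[bytes]], parity: bytes) -> list[bytes]:
    """Rebuild at most one missing packet (marked None) from the parity packet."""
    missing = [i for i, p in enumerate(received) if p is None]
    if len(missing) > 1:
        raise ValueError("single parity can repair only one loss per group")
    present = [p for p in received if p is not None]
    if not missing:
        return present
    # XOR of the parity with every surviving packet equals the lost packet.
    rebuilt = reduce(xor_bytes, present, parity)
    repaired = list(present)
    repaired.insert(missing[0], rebuilt)
    return repaired
```

The trade-off is bandwidth for latency: each group costs one extra packet, but a single loss is repaired immediately rather than after a round trip, which matters when the feed crosses an ocean.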

Once everything is received, we decode the feeds and route them around our production facility to various control rooms as needed. Our Worlds feed, for example, consists of shots and instant replays but no caster audio, which is added further down the chain. Once all that is done, we broadcast live to fans around the world in over 19 languages, reaching 99.6 million concurrent viewers at our peak.

Worlds 2018 direct broadcast partners - gif: Anthony Wastella

Broadcast Innovations

Our broadcast production team also explores new ways of implementing cutting-edge technologies, partnering with vendors to integrate projection mapping (2016) and augmented reality (Worlds dragon in 2017 and K/DA in 2018) for our opening ceremonies, which allow us to experiment with experiences meant to surprise and delight our players.

The 2017 Worlds dragon was the first of its kind, as multi-camera AR at that size and scale had not been attempted before. It involved 3 cameras spread around the venue floor with specialty tracking that delivered synchronized, frame-by-frame positional data to several real-time augmented reality processing servers. The show producers wanted to be able to use any of the other 30+ cameras around the arena to capture live performance moments during the opening ceremonies. This presented a problem, because the AR processing inherently added some delay. We had to make sure the delayed AR cameras could be cut live with any of the other cameras with no timing offset between them, and with audio kept in sync. We actually received an Emmy Award for Outstanding Live Graphic Design for pulling this off.
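The article does not describe how the timing was matched. A common way to handle this kind of problem is to delay every faster source, video and audio alike, so that all of them line up with the slowest path (here, the AR-processed cameras). The sketch below computes those compensating delays. The source names and latencies are hypothetical and measured in frames, and the conversion to milliseconds is for setting audio delay lines.

```python
from fractions import Fraction


def alignment_delays(latency_frames: dict[str, int]) -> dict[str, int]:
    """Extra frames of delay per source so every source matches the slowest one."""
    slowest = max(latency_frames.values())
    return {src: slowest - lat for src, lat in latency_frames.items()}


def frames_to_ms(frames: int, fps: Fraction) -> Fraction:
    """Convert a frame delay to milliseconds (exact, so 59.94 fps stays precise)."""
    return Fraction(frames * 1000) / fps


# Hypothetical pipeline latencies, in frames.
latencies = {"ar_cam_1": 4, "ar_cam_2": 4, "stage_cam_7": 1, "crowd_mics": 0}
delays = alignment_delays(latencies)
# delays == {"ar_cam_1": 0, "ar_cam_2": 0, "stage_cam_7": 3, "crowd_mics": 4}
crowd_ms = frames_to_ms(delays["crowd_mics"], Fraction(60000, 1001))  # 59.94 fps
# crowd_ms == Fraction(1001, 15), about 66.7 ms
```

Once every source carries the same total delay, the director can cut between an AR camera and any other camera without a visible jump, at the cost of the whole show running a few frames behind real time.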

Fast forward to Worlds 2018, where our opening ceremony premiered K/DA, a virtual K-pop group made up of League of Legends champions. We brought them to life through AR to perform dance choreography alongside the pop stars who voiced their characters. Once again we faced a unique challenge, with the added complexity of seamlessly mixing real-life dancers with their AR counterparts. Choreography and camera moves had to be extremely precise in movement and timing.

What’s Next?

This year at Worlds, Riot’s Esports Technology Group will be attempting an industry first: remote transmission using a new compression codec called JPEG-XS. Standardized internationally as ISO/IEC 21122, JPEG-XS promises vastly improved video compression quality and sub-1ms latency, a 93% decrease from the current industry standard!

Every millisecond saved brings Worlds action to players’ screens faster and at a higher quality. As part of our test we’ll have nine paths of JPEG-XS between our Los Angeles production facilities and each of our venues throughout Worlds. The total bandwidth dedicated to this test is an impressive 2.7 gigabits per second between LA and Worlds. This test is the first step on our path to 4K, and eventually 8K broadcasts.
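Spreading the stated test bandwidth across the nine paths gives the per-path budget. The comparison with uncompressed video is a hypothetical illustration: it assumes each path carries a 1080p60 signal with 10-bit 4:2:2 sampling (20 bits per pixel on average, active picture only) and ignores protocol overhead. The article does not state the actual format.

```latex
\frac{2.7\ \text{Gb/s}}{9\ \text{paths}} = 300\ \text{Mb/s per path}, \qquad
1920 \times 1080 \times 20\ \text{bits} \times 60\ \text{s}^{-1} \approx 2.49\ \text{Gb/s}, \qquad
\frac{2.49\ \text{Gb/s}}{300\ \text{Mb/s}} \approx 8.3{:}1
```

Under that assumption, each path would carry video compressed by roughly 8:1.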

As part of the JPEG-XS test, Riot will leverage SMPTE 2110 both at Worlds and at our production facility in Los Angeles to distribute both uncompressed (ST2110-20) and compressed (ST2110-22) video signals in a dedicated network segment. ST2110 promises more flexibility for content creators like Riot who heavily leverage IP networks.

And... Cut!

Once we have everything set up, it’s showtime!

From networking infrastructure to broadcast requirements to servers and pro player PC fleets, we continually iterate on new technology at scale according to the needs of our events, perfecting our process and delivery along the way. From the humble beginnings of League of Legends esports at DreamHack to the spectacle of the Bird’s Nest and Munhak Stadium in the last few years, the Esports Technology Group has evolved along with the tech we’ve been developing, and we will keep supporting the largest esports in the world for years to come. Keep your eyes open: there’s some exciting stuff down the line. We can’t wait, and we hope that you’ll accompany us on this journey!

Thanks for reading! If you have any questions for us, feel free to comment below!