Bug Blog: TFT Bugs and Patches

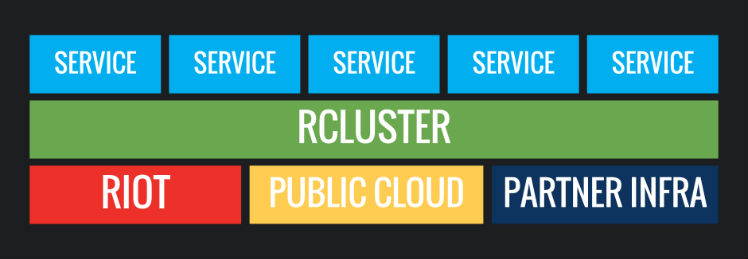

In this article, I’ll describe how we handle patches across PC and mobile for TFT, and how that process ties into quality assurance. Later, I’ll tag in my engineering counterpart, Gavin Jenkins, to give a super techy point of view on patches, and we’ll dive into two cases that demonstrate different types of patches and how we deploy them.