Bringing Features to Life in Legends of Runeterra

Hi there! I’m Jules Glegg, a Software Architect at Riot, and I’m one of the engineers who helped create Legends of Runeterra. LoR is a strategy card game set in the League of Legends universe - and it’s our first fully realized standalone game since League of Legends came out just over a decade ago!

A whole new game means a whole heap o’ new technology. While we won’t be able to cover all of it in one go, I’d love to give you a glimpse at how we add features to LoR. I’ll walk through our process step by step: making a feature work so we can playtest it, making it presentable using our suite of artist tools, tweaking it for balance, and finally making it shine by adding motion and sound before sending it out to players.

First up, some quick facts about LoR:

- It’s created in Unity.
- It runs on Windows PC, Android, and iOS.
- Almost all code - both client and server - is written in C#. C# is a statically-typed language that prioritizes correctness and legibility. Designers are able to use Python to create scripted content such as cards and quests.
- We use Git and LFS for version control. LFS is important because games contain huge piles of binary data for textures, audio, vendor tools, and so on.

On the Shoulders of Giants

Just like its big sister League, LoR is set up to ship a patch every two weeks. This means it’s super important for us to keep the game’s main development branch stable, and to ensure that any high-voltage experiments with new features or gameplay take place in their own development branches.

Let me tell you - there are benefits to being the second game out the door. Our friends in the Riot Platform Group have invested enormous effort into making sure all our “Research and Development” titles can take advantage of the infrastructure that already distributes League of Legends to players around the world. Our team gets a lot of stuff for free, including the new launcher which provides us with ultra-efficient patching, push notifications, and more - all based on the League Client architecture.

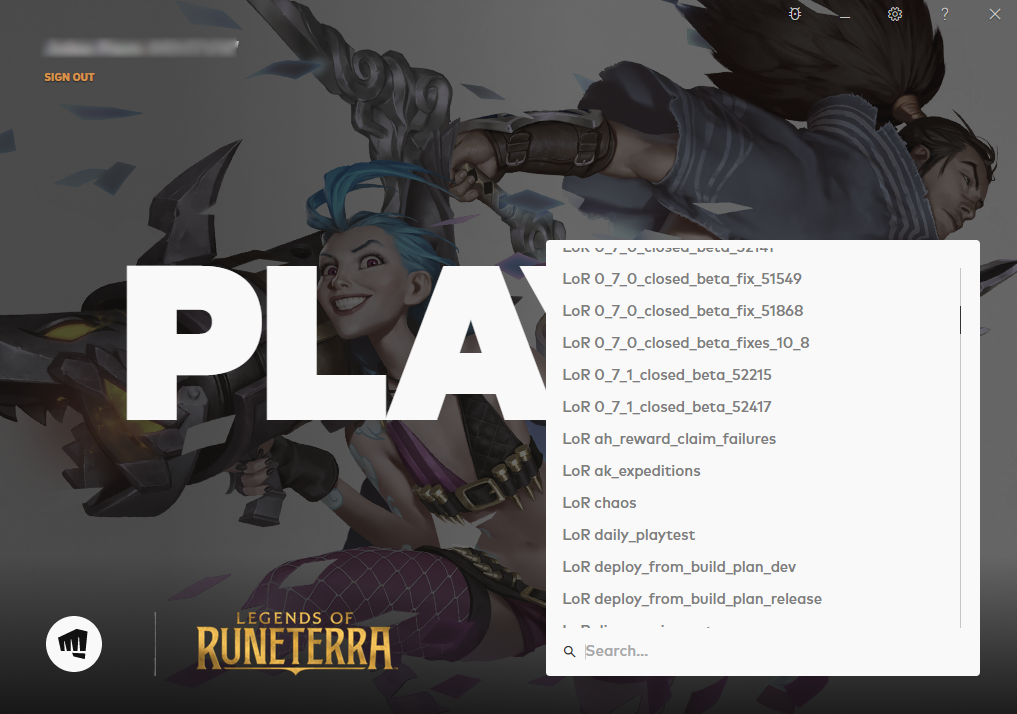

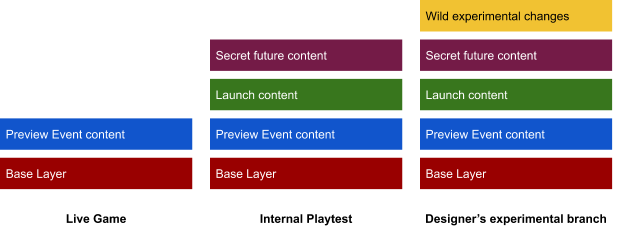

This strong foundation allows the LoR team to work in ways that simply aren’t available to a lot of teams. We even use the launcher internally for playtesting! Ours just looks a little more... chaotic:

Yep - those are all Git branches. Any development branch, from the smallest bugfix to the grandest of overhauls, can be fully deployed and patched out on its own environment for testing. Game designers are able to make experimental changes to the game and playtest them at a team-sized scale within a couple of hours - this is a game-changer for iteration.

Make it work

Once we have a development environment all to ourselves, it’s time to get to work! By now, someone on our design team will have a rough proposal for a feature they think belongs in the game. They’ll say something like:

“We really want players to feel encouraged to experiment with new decks in LoR, so let’s make sure they have a steady source of new cards to try in their decks as they play.”

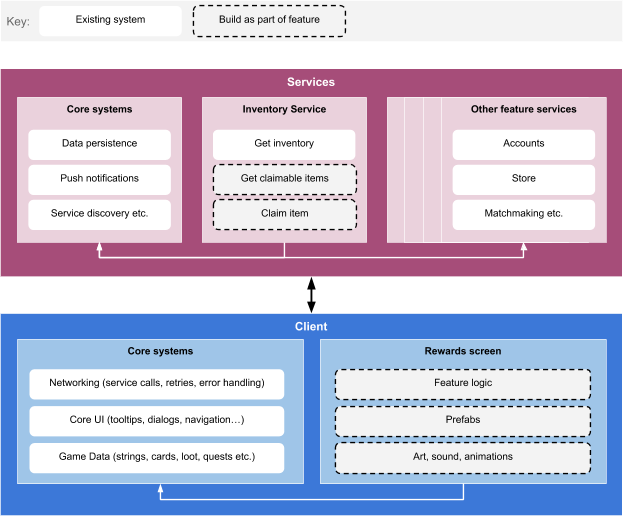

Well, there are a million and one ways we could implement that. And we won’t really know we’ve chosen the right one until we playtest it, so we’re probably going to have to try a few different approaches. That means we need to build the game in a way that lets us experiment quickly, so that, as often as possible, adding a feature only involves making stuff directly related to that feature. We’re not perfect on this front, but the team has made significant strides in this direction:

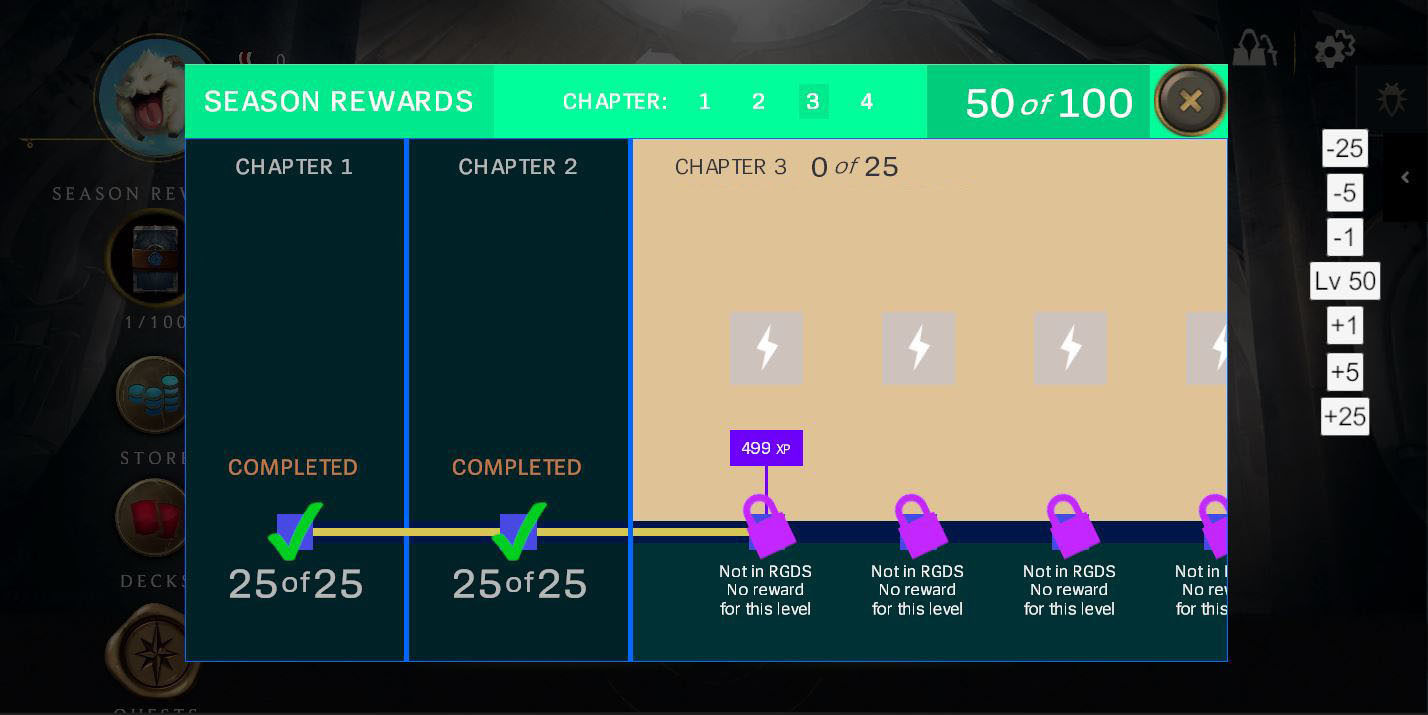

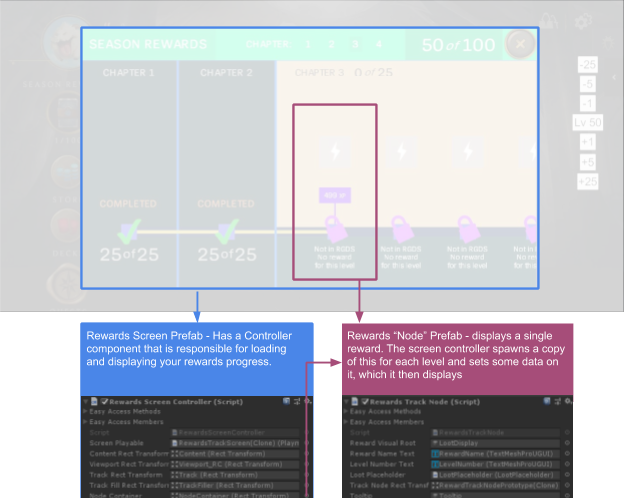

The first prototype for our feature is usually objectively hideous but fully functional, and this level of polish is fondly referred to as “developer art” by the team. Here’s an early iteration of LoR’s Rewards screen, with some developer tools that enable us to try out the experience at different levels. This version of the experience was chaptered, and would have been thematically presented as a journey across the world of Runeterra:

To understand how this beautiful, pastel-tinted developer art prototype was made, we’ll need to understand four common Unity concepts:

- The “world” inside a Unity game is made up of GameObjects. GameObjects can contain other GameObjects, and can be positioned in the world, but they don’t have any real behaviour of their own. Compare them to a DIV element in HTML.
- GameObjects only do things when you add one or more Components to them. Components can turn a GameObject into almost anything - a camera, a player character, a card, a button, a sound emitter, or anything else we can dream up. Components are written in code but they can be assigned to GameObjects in the Unity Editor.
- Components can be given Properties to customize or configure their behavior. Properties can be plain values like strings or numbers, or they can point to other Unity objects, allowing Components to communicate with one another. Properties are defined in code but can be hooked up using drag-and-drop in the Unity Editor. This is the means by which engineers provide the groundwork for artists.
- Arrangements of GameObjects, Components, and Properties can be saved as a Prefab, allowing us to spawn multiple instances of it as needed. You can think of them as templates.
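If it helps to see those four ideas as code, here is a minimal, engine-agnostic sketch in Python. It is not Unity’s API and not LoR’s code: `GameObject`, `Component`, `Label`, `ListFiller`, and `instantiate` are hypothetical stand-ins that only mirror the relationships described in the list above.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class Component:
    """Behaviour attached to a GameObject; its fields stand in for Properties."""


@dataclass
class Label(Component):
    text: str = ""  # a plain-value Property


@dataclass
class GameObject:
    """A named container: holds children and Components, no behaviour of its own."""
    name: str
    components: list = field(default_factory=list)
    children: list = field(default_factory=list)

    def get(self, component_type):
        """Return the first attached Component of the given type, or None."""
        return next((c for c in self.components if isinstance(c, component_type)), None)


def instantiate(prefab):
    """Spawn an independent copy of a saved arrangement (a stand-in for a Prefab)."""
    return copy.deepcopy(prefab)


@dataclass
class ListFiller(Component):
    """Hypothetical Component whose Properties point at other objects."""
    container: GameObject = None    # where spawned items are parented
    item_prefab: GameObject = None  # template to spawn for each entry

    def fill(self, texts):
        for text in texts:
            item = instantiate(self.item_prefab)
            item.get(Label).text = text
            self.container.children.append(item)


# Wiring that an artist would do by drag-and-drop in an editor.
reward_prefab = GameObject("RewardTile", components=[Label("?")])
container = GameObject("RewardsContainer")
filler = ListFiller(container=container, item_prefab=reward_prefab)
filler.fill(["Level 1", "Level 2"])

print([child.get(Label).text for child in container.children])  # ['Level 1', 'Level 2']
print(reward_prefab.get(Label).text)  # '?' (the template itself is unchanged)
```

The point of the sketch is the split of responsibilities: the code defines which slots exist, and whoever does the wiring decides what goes in them.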

Let’s take another look at that screen through the lens of these Unity concepts. In this situation, an engineer has created a Component named RewardsScreenController, which is responsible for loading and displaying the player’s progress and rewards. In order to do that, the game needs to know which GameObject will contain the rewards, which Prefab to use as a template for the rewards, which text label needs updating to show the current level, and so on. All of these are exposed as Properties on the Component, allowing our artists to play with different layouts and visual styles - all they have to do is drag and drop things onto the Component to connect them:

Make it presentable

The level of prototyping shown above is clearly not shippable to players but it’s close enough for the team to try it out and see if what we’ve made feels fun. Assuming we’re happy with the result, we’ll try out a slightly more polished version - and if we’ve done our work right, this can be done with little to no change to code.

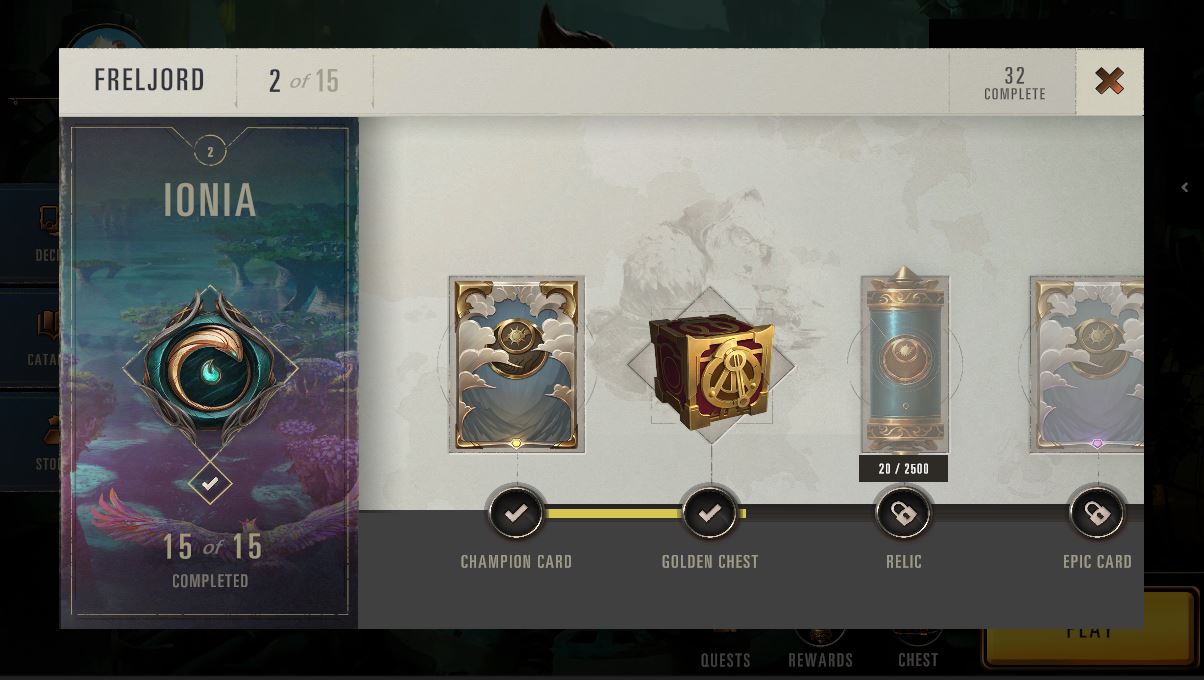

Each Component in the Rewards screen is wired up to a variety of text fields, image placeholders, and Prefabs it should use when populating its content. This is absolutely ideal because it permits an artist to come along later and massively overhaul the screen’s appearance - which we did, because this design felt good at the time. The screenshot below shows the Rewards screen after an artist had spent some time fixing up the developer art:

Early iteration of the Rewards screen, this time after an artist has fixed things up.


The practices we just outlined serve us really well for bespoke assets limited to a single screen, but it’s also important for us to give artists the power to drive consistency between screens. It’s incredible how quickly an out-of-place font size here or an outdated button there can make a game feel unfinished or poorly made.

Consistency Tools

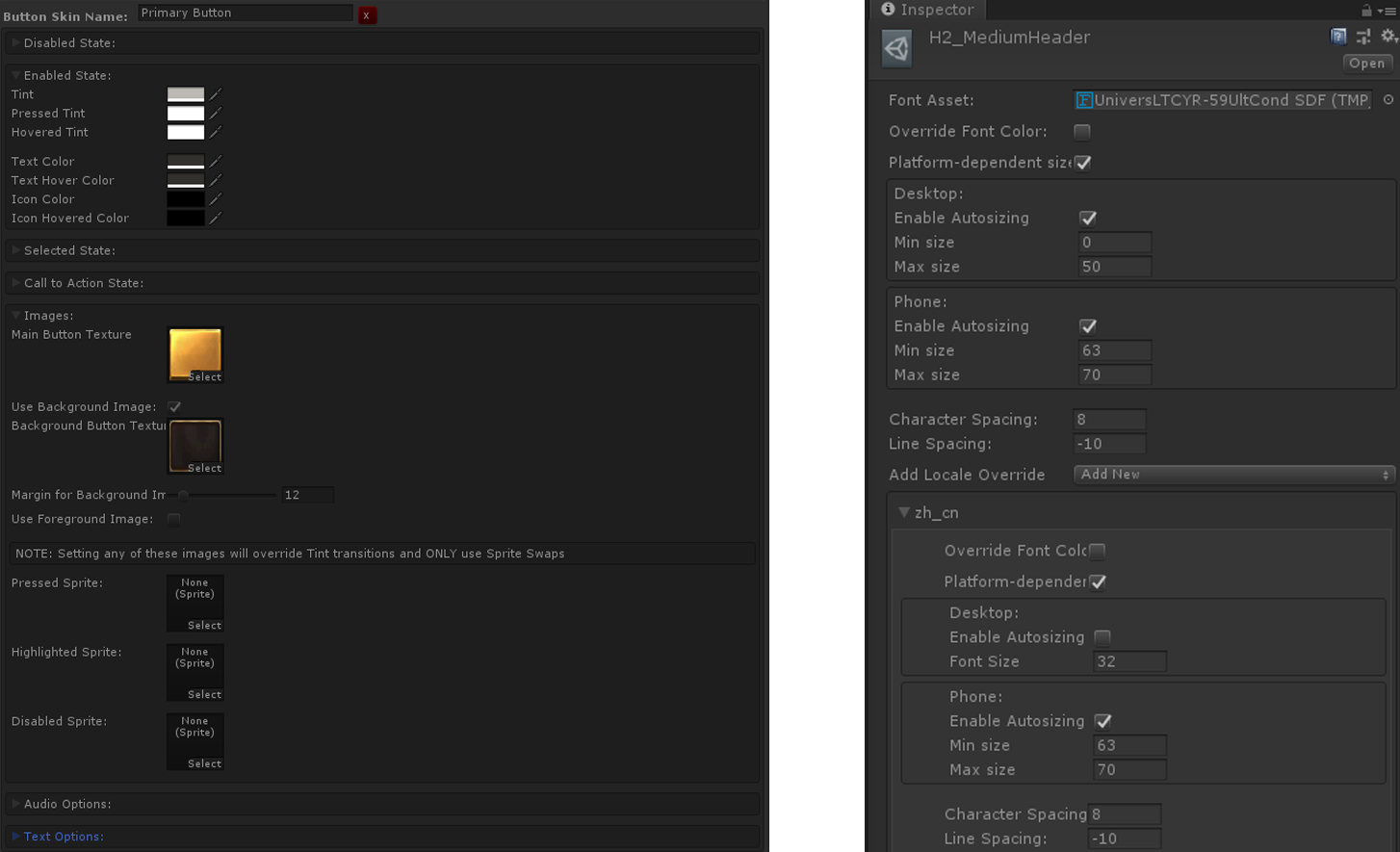

Some of this can be achieved by completely removing certain responsibilities from individual features in favor of using core systems (such as dialog box management) which provide the same consistent experience to each feature. The rest, including fiddly things like text and button formatting, need a more local solution. Thankfully, the Unity Editor is very versatile, and can be extended with new UI totally unique to your game. We combine this with ScriptableObjects (Unity’s lightweight data container) to put tools right in the editor that help artists with these common tasks:

To format text consistently with the rest of the game, artists need only add a “Styleable Text” component to any GameObject and drag in the style they would like to use. It’ll be kept up to date automatically if we revisit the style later. There is a tradeoff here - updating a style can involve testing large chunks of the client to ensure you didn’t break anything. We’ve found that this tradeoff is worthwhile to ensure that we have a limited, consistent set of text styles in the game.
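The “kept up to date automatically” part comes from text elements holding a reference to a shared style rather than a copy of its values. Here is a rough Python sketch of that idea, assuming a made-up `TextStyle` data object (standing in for a ScriptableObject) with separate desktop and mobile sizes. The class names, fields, and platform strings are illustrative assumptions, not the real tool.

```python
from dataclasses import dataclass


@dataclass
class TextStyle:
    """Shared style data (hypothetical stand-in for a ScriptableObject asset)."""
    font: str
    size_desktop: int
    size_mobile: int

    def size_for(self, platform: str) -> int:
        # Assumed platform names; mobile platforms get their own size.
        return self.size_mobile if platform in ("android", "ios") else self.size_desktop


@dataclass
class StyleableText:
    """Text element that stores a reference to a style, not a copy of its values."""
    content: str
    style: TextStyle

    def render(self, platform: str) -> str:
        return f"{self.content} [{self.style.font} {self.style.size_for(platform)}pt]"


body = TextStyle(font="BodyFont", size_desktop=14, size_mobile=18)
title = StyleableText("Rewards", body)
hint = StyleableText("Tap to open", body)

print(title.render("windows"))  # Rewards [BodyFont 14pt]

# Revisiting the style later changes every element that references it.
body.size_mobile = 20
print(hint.render("ios"))  # Tap to open [BodyFont 20pt]
```

This is also where the tradeoff comes from: one edit to `body` reaches every screen that uses it, which is exactly why a style change can mean retesting large parts of the client.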

One game, hundreds of devices

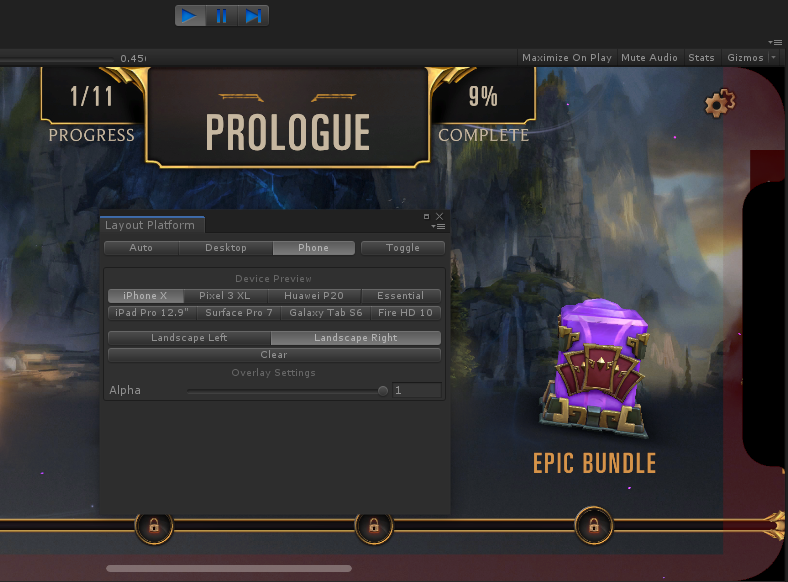

You may have noticed that the text style editor allows artists to choose different sizes on mobile platforms. This is super important for accessibility, as the smaller footprint and higher resolution of many mobile devices can leave text illegible. And besides the challenge of screen size, we also need to be sensitive toward the ever-growing number of devices that play with the screen’s shape - puncturing it with floating cameras or impinging on its visible area with a camera notch. So that artists can test their artwork under a variety of such conditions, we added a simulator tool to Unity that throws the game into a device-appropriate aspect ratio and loads in a notch image to indicate which (if any) content will be obscured in various device orientations:

This simulator tool comes paired with a tiny Component which artists can place on any UI object to automatically constrain it from entering the “unsafe” area, depicted above with a red tint. This tool is crucial for speed as it helps us (mostly) avoid the costly process of building and deploying the game to a physical device just to test out a minor layout or formatting change.
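The geometry behind a constraint like that is small. The following Python sketch shows one way it could work, assuming the safe area is a single rectangle and that an element should be shifted (and shrunk only if it cannot fit) to stay inside it. `Rect`, `constrain_to_safe_area`, and the screen and notch dimensions are all hypothetical; the real Component may behave differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    x: float       # left edge
    y: float       # bottom edge
    width: float
    height: float

    @property
    def right(self) -> float:
        return self.x + self.width

    @property
    def top(self) -> float:
        return self.y + self.height


def constrain_to_safe_area(element: Rect, safe: Rect) -> Rect:
    """Shift the element inside the safe rectangle, shrinking only if it cannot fit."""
    width = min(element.width, safe.width)
    height = min(element.height, safe.height)
    x = min(max(element.x, safe.x), safe.right - width)
    y = min(max(element.y, safe.y), safe.top - height)
    return Rect(x, y, width, height)


# A 2340x1080 landscape screen with an assumed 90px notch along the left edge.
safe_area = Rect(90, 0, 2250, 1080)
button = Rect(20, 40, 200, 80)  # starts partly under the notch

print(constrain_to_safe_area(button, safe_area))
# Rect(x=90, y=40, width=200, height=80)
```

An element that already sits inside the safe rectangle comes back unchanged, so a constraint like this can be applied liberally without affecting layouts that were fine to begin with.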

Make it balanced

Code and art make the feature, but content makes the game. And because we want to give players a lot of content over time, we need to give our game designers a solid authoring tool. Happily, designers on League of Legends have been making and delivering content for many years, and they’ve already created the tools to do it.

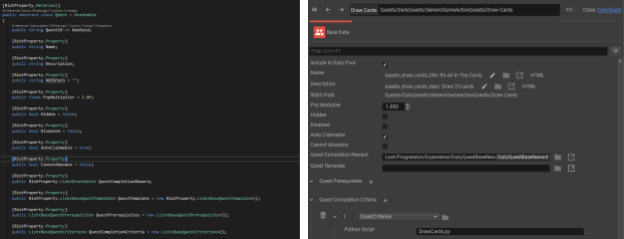

Just like League, our game uses Riot Game Data Server for authoring every piece of content in the game - from cards to quests, from tutorials to chests. As we learned from Bill Clark's post about RGDS, the system is fed by a definitions file that tells it about all the different types of content in the game. By replacing the League definitions file with one generated from our C# code, the Riot Editor tool happily turns into an editor for LoR content:

You might be wondering why, if we use Unity ScriptableObjects for so much client data, we don’t use them for content. There are two reasons for this - for one, content needs to be available to both the client and our hosted services, so Unity-specific tech won’t meet our needs. And two, so we can use RGDS’ killer feature: Layers.

Layers allow us to neatly partition content so that not all of it goes live. Stuff we’re still working on isn’t shipped at all to the live game (sorry, Moobeat) while still being accessible on our internal environments for playtesting. This same system can be used to enable risky experimental changes to the game (like, say, making She Who Wanders a 1-cost) on select environments for playtesting:

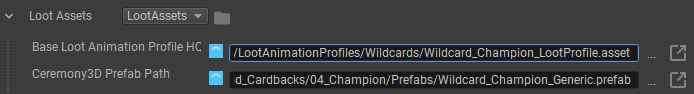
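To make the idea of Layers a little more concrete, here is a toy Python model. It assumes that layers are applied in order and that a later layer can override individual fields of content defined in an earlier one. That merge rule, the layer names, and the card data are all assumptions made for illustration; this is not how RGDS is actually implemented.

```python
def resolve_content(layers, enabled):
    """Merge the enabled layers in order; later layers override earlier ones field by field."""
    resolved = {}
    for name, entries in layers:
        if name not in enabled:
            continue  # content in a disabled layer never reaches this environment
        for content_id, fields in entries.items():
            resolved.setdefault(content_id, {}).update(fields)
    return resolved


# Hypothetical layers: shipped content, unreleased content, and a risky experiment.
layers = [
    ("base", {"card_a": {"name": "Card A", "cost": 5}}),
    ("upcoming", {"card_b": {"name": "Card B", "cost": 3}}),
    ("experiment_cheap_a", {"card_a": {"cost": 1}}),
]

live = resolve_content(layers, enabled={"base"})
playtest = resolve_content(layers, enabled={"base", "upcoming", "experiment_cheap_a"})

print(live)                        # {'card_a': {'name': 'Card A', 'cost': 5}}
print(playtest["card_a"]["cost"])  # 1
print("card_b" in live)            # False
```

The same data set produces two different games depending on which layers an environment turns on, which is what makes experiments cheap to try and easy to throw away.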

Sometimes, game content will have associated art assets. Cards and loot are great examples - every card has a (potentially huge) bundle of art, VFX, animations, and audio associated with it, and every loot item needs a 3D model and set of animations to celebrate you receiving it. This is an awkward problem to solve because it sits right at the intersection of Unity’s built-in asset management and our studio-specific data tools - we want artists to be able to author content in-editor, but we also need to avoid shipping assets related to all the cool future content living in unreleased RGDS Layers.

The solution is certainly a compromise, but it has been serving us well - we extended the RGDS Editor to support fields that directly refer to Unity Prefabs (for art) and ScriptableObjects (for data) on-disk. Our build process ensures that Unity assets referenced in the pertinent Layers are shipped, and those in unpublished Layers are kept hidden for the time being.
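As a rough sketch of that build step, the Python below collects only the asset paths referenced by content in published layers. The data layout, the function name `assets_to_ship`, and the file paths are invented for the example; the real build process is not described in enough detail here to reproduce.

```python
def assets_to_ship(content_by_layer, published_layers):
    """Collect asset paths referenced by content in published layers only."""
    shipped = set()
    for layer, entries in content_by_layer.items():
        if layer not in published_layers:
            continue  # assets for unreleased content stay out of the build
        for entry in entries:
            shipped.update(entry["assets"])
    return shipped


# Hypothetical content records, each pointing at Unity assets on disk.
content_by_layer = {
    "base": [
        {"id": "chest_basic",
         "assets": ["Prefabs/ChestBasic.prefab", "Data/ChestBasicAnims.asset"]},
    ],
    "upcoming": [
        {"id": "chest_future", "assets": ["Prefabs/ChestFuture.prefab"]},
    ],
}

print(sorted(assets_to_ship(content_by_layer, {"base"})))
# ['Data/ChestBasicAnims.asset', 'Prefabs/ChestBasic.prefab']
```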

An artist creates a reward object as two files - a Prefab containing the 3D model, and a ScriptableObject defining animations for opening, revealing or upgrading the object.

By combining RGDS with our system of deployable Git branches, it becomes possible for any content or systems designer on the LoR team to rapidly deploy their own instance of the game with any experimental change they choose - no engineer required!

Make it shine

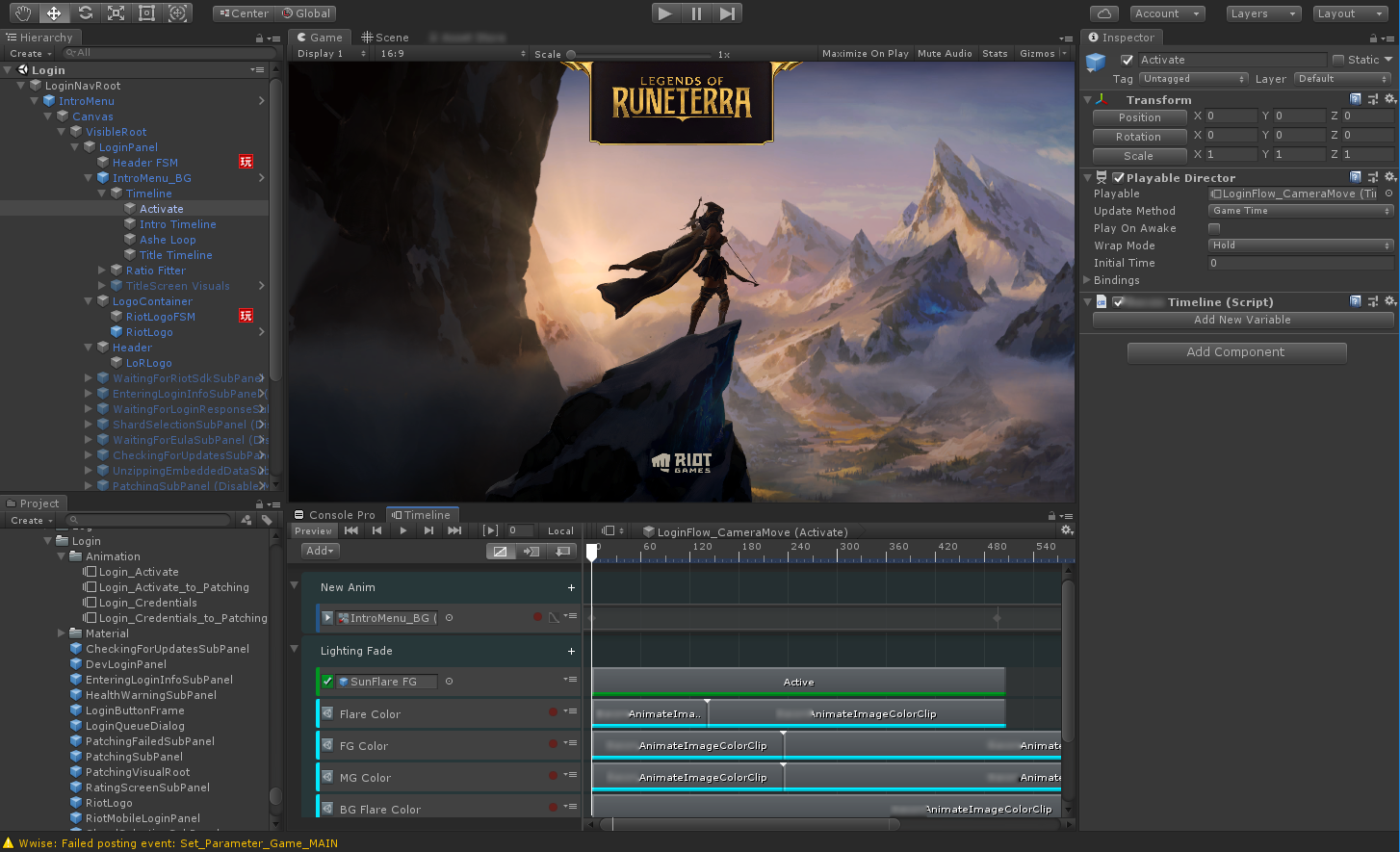

By now, our feature is fully playable on an internal environment, it has some nice art, and it looks good in screenshots, but something is still missing. There’s no life, no motion, no sound. That means it’s time for our motion graphics artists to get involved.

Just as with the process of taking a developer art prototype to a more realized static art form, this process begins with a little engineering work but rapidly transitions to a very artist-led iteration loop.

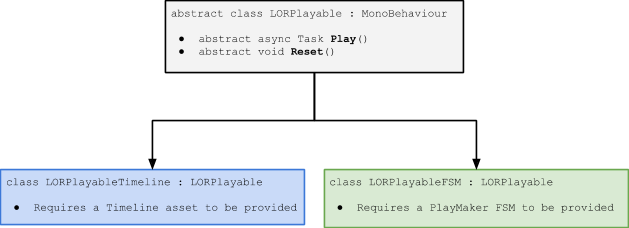

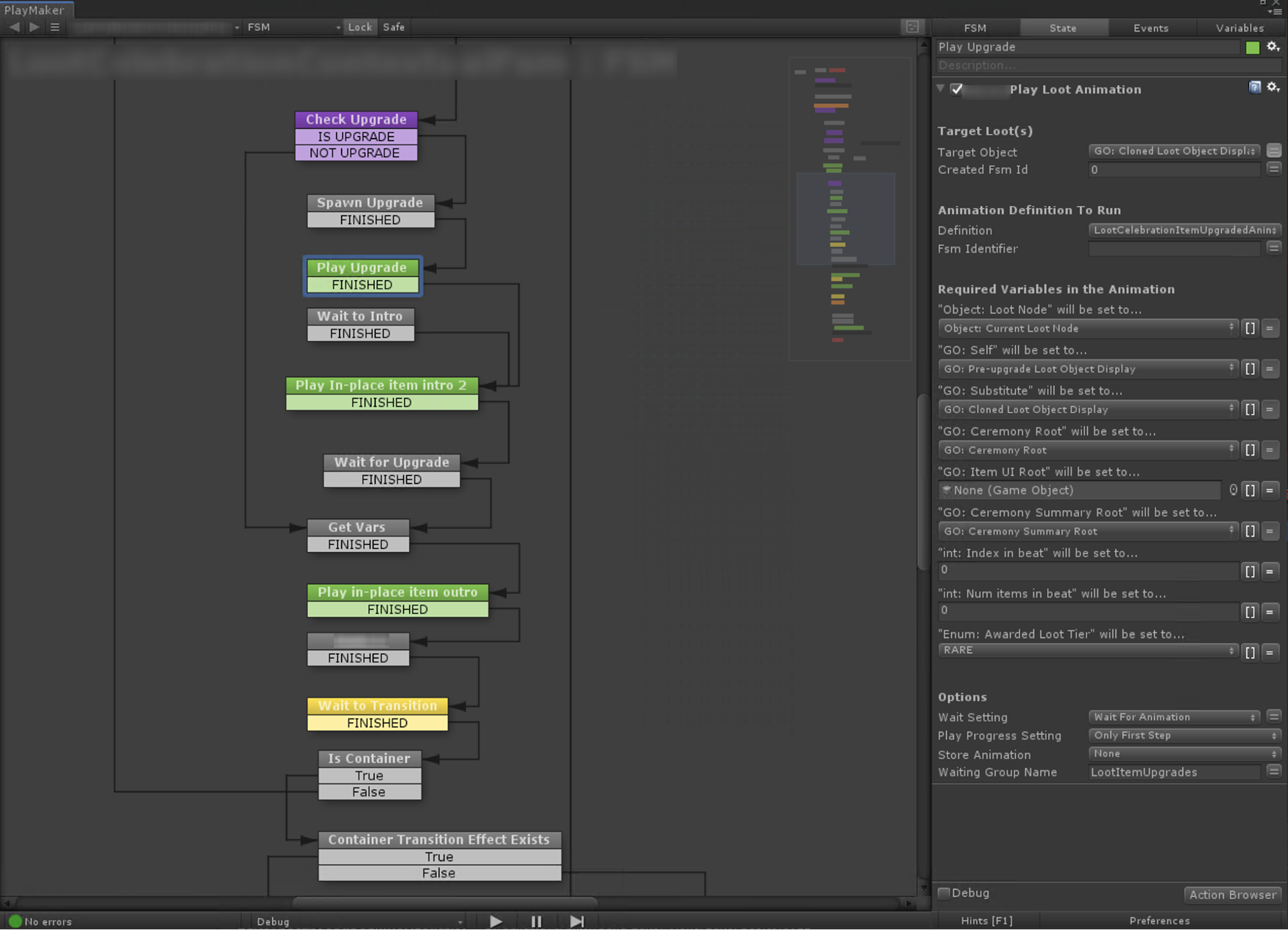

At the highest possible level, an engineer adds Properties to their Components that allow some form of animation to be provided. The engineer doesn’t know the animation’s length or content, only that it should be triggered against a particular set of GameObjects when a particular event occurs. For example, when the player navigates to a new screen, we’d play an artist-defined outro animation on the old screen and an intro animation on the new one before allowing further actions to take place. To complicate things, LoR uses a couple of different systems for animations - Unity Timeline for simple linear animations, and PlayMaker (a robust visual FSM tool) for more complex animations that contain state and logic.

We want artists to be able to use the right tools for any given situation, so we avoid the use of Properties that directly specify which animation system gets used for a particular case. Instead, we provide a family of components that hide the artist’s choice of animation tool from the engineer, and provide a common interface for engineers to write code against:

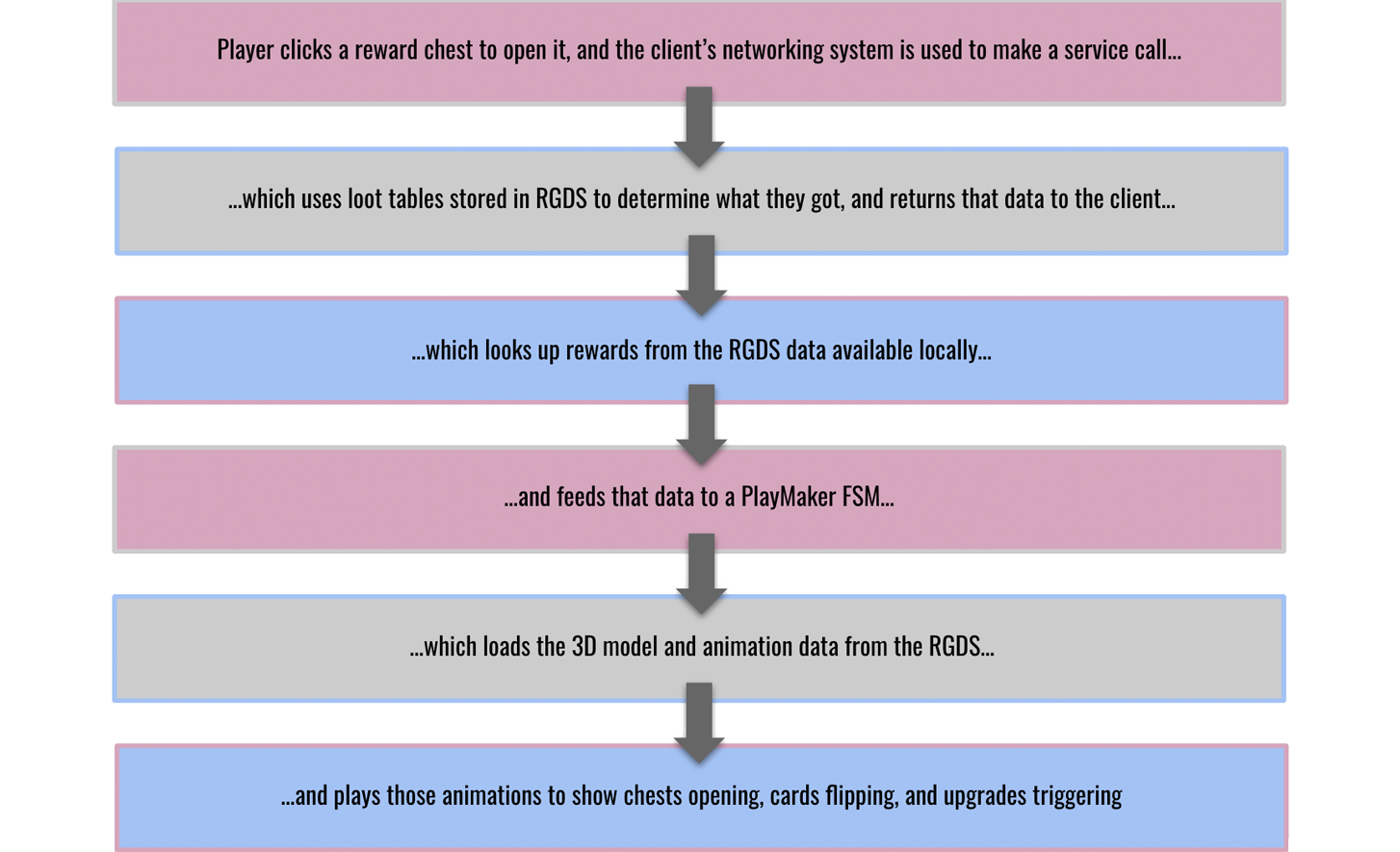
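In code terms, that family of components amounts to a shared interface with one implementation per animation tool. Here is a simplified Python sketch, assuming hypothetical names (`ScreenAnimation`, `TimelineAnimation`, `StateMachineAnimation`, `navigate`) and synchronous playback; real animations would run over many frames, and none of this is Unity Timeline’s or PlayMaker’s API.

```python
from abc import ABC, abstractmethod


class ScreenAnimation(ABC):
    """Common interface engineers code against, whatever tool the artist chose."""

    @abstractmethod
    def play(self, log: list) -> None:
        """Run the animation to completion, recording what happened in log."""


class TimelineAnimation(ScreenAnimation):
    """Stand-in for a simple linear animation."""

    def __init__(self, clip: str):
        self.clip = clip

    def play(self, log: list) -> None:
        log.append(f"timeline:{self.clip}")


class StateMachineAnimation(ScreenAnimation):
    """Stand-in for an animation that steps through states."""

    def __init__(self, states: list):
        self.states = states

    def play(self, log: list) -> None:
        for state in self.states:
            log.append(f"fsm:{state}")


class Screen:
    def __init__(self, name: str, intro: ScreenAnimation, outro: ScreenAnimation):
        self.name, self.intro, self.outro = name, intro, outro


def navigate(old: Screen, new: Screen, log: list) -> None:
    """Play the old screen's outro, then the new screen's intro, before input resumes."""
    old.outro.play(log)
    new.intro.play(log)
    log.append(f"input enabled on {new.name}")


home = Screen("Home", TimelineAnimation("home_in"), TimelineAnimation("home_out"))
rewards = Screen("Rewards", StateMachineAnimation(["fade", "reveal"]),
                 TimelineAnimation("rewards_out"))

events = []
navigate(home, rewards, events)
print(events)
# ['timeline:home_out', 'fsm:fade', 'fsm:reveal', 'input enabled on Rewards']
```

Notice that `navigate` never checks which kind of animation it was given, so an artist can swap a linear animation for a state machine without anyone touching the navigation code.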

While Unity Timeline is pretty straightforward, the world inside a PlayMaker FSM is more fluid. Here, artists can freely combine and experiment with small building blocks (called “Actions”) which an engineer provides ahead of time. LoR’s loot ceremony is a particularly complex example, as the entire animation happens inside one of these artist-editable FSMs, combining all the concepts we’ve discussed today:

Rewards are a pretty extreme example when it comes to iteration and testing. There’s a lot of services-side stuff that needs to happen when rewards are claimed, and there’s also a component of luck involved, so testing a rarer item could be problematic. This kind of problem is an ideal candidate for specialized tooling. In the case of rewards, we give artists a special test scene (like a secret, unshipped level) that includes tools for simulating any combination of rewards they would like:
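One way to picture such a test scene is as a swap of the thing that decides what you get. In the Python sketch below, the ceremony code asks a “reward source” for each reward; the live-style source rolls with weighted luck, while the simulated source returns exactly what was requested. The class names, rarity labels, and weights are illustrative assumptions, not LoR’s actual drop rates or tooling.

```python
import random


class RandomRewardSource:
    """Stand-in for the live path: each reward's rarity is rolled with weighted luck."""

    def __init__(self, weights, rng=None):
        self.weights = weights            # hypothetical rarity -> weight table
        self.rng = rng or random.Random()

    def claim(self):
        rarities = list(self.weights)
        rolled = self.rng.choices(rarities, weights=[self.weights[r] for r in rarities])
        return rolled[0]


class SimulatedRewardSource:
    """Test-scene path: hands back exactly the rewards that were asked for, in order."""

    def __init__(self, scripted):
        self.scripted = list(scripted)

    def claim(self):
        return self.scripted.pop(0)


def run_ceremony(source, count):
    """Reveal `count` rewards from whichever source was plugged in."""
    return [f"reveal:{source.claim()}" for _ in range(count)]


# An artist who wants to polish the rarest reveal does not have to wait for luck.
print(run_ceremony(SimulatedRewardSource(["champion", "common"]), 2))
# ['reveal:champion', 'reveal:common']
```

Because `run_ceremony` only depends on `claim()`, the same ceremony logic runs in both cases; only the source of rewards changes.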

Make it public

Once things are really moving and singing on-screen, we’re perilously close to putting the feature in players’ hands! From here it’s a matter of finding as many bugs as possible, playtesting to make sure everything feels right, and making improvements by revisiting some or all of the steps we’ve covered in this article.

A final, polished Rewards screen!


It’s been so incredibly exciting to see Legends of Runeterra land in players’ hands, and the response has been truly overwhelming for all of us on the team. There are so many more exciting things coming, from more behind-the-scenes tidbits like the ones in this article to a jam-packed roadmap of content and features. Thank you for reading, and thank you for playing!

If you have any questions about this article or about other aspects of LoR’s technology, feel free to comment below.